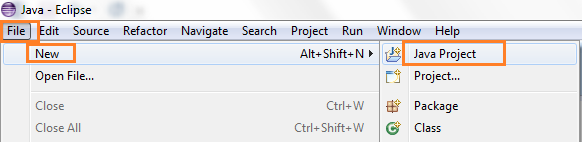

- Write a MapReduce program in Java and bundle it in a JAR file. My previous post, “First Example Project using Eclipse”, shows how to create a MapReduce program in Java using Eclipse and package it as a JAR file.

- The client submits the job to the JobTracker by running the JAR file ($ hadoop jar ….). The driver program (WordCountDriver.java) acts as the client and submits the job by calling “JobClient.runJob(conf);”. The program can run on any node in the Hadoop cluster (as a separate JVM) or outside the cluster. In our example, we run the client program on the same machine as the JobTracker, which is usually the NameNode. The job submission steps include:

Hadoop

The Hadoop Ecosystem Table

A list of the major projects and tools surrounding Hadoop, with their categories, that together make up an Enterprise Data Platform. The ecosystem is growing at a rapid pace to keep up with the three Vs of Big Data: Volume (Big), Velocity (Fast) and Variety (Smart). Find the table below:

Apache Hadoop Installation and Cluster Setup: Part-3

Let’s start with HadoopNameNode (master), then repeat the same steps for the SNN and the 2 slaves. Connect to HadoopNameNode through PuTTY and run the commands below.

Update the packages and dependencies

| $ sudo apt-get update |

Install Oracle Java

Install the latest Oracle Java (JDK) 7 on Ubuntu

Setting up Client Access to Amazon EC2 Instances: Part-2

To prepare to connect to a Linux instance from Windows using PuTTY

1. Download and install PuTTY and PuTTYgen from here.

2. Start PuTTYgen (from the Start menu, click All Programs > PuTTY > PuTTYgen)

Setting up infrastructure with Amazon EC2: Part-1

If you’ve already signed up for Amazon Web Services (AWS), you can start using Amazon EC2 immediately. You can open the Amazon EC2 console, click Launch Instance, and follow the steps in the launch wizard to launch your first instance.

Get Amazon **FREE** AWS Account

If you do not already have an account, please create a new free one. Amazon EC2 offers free-tier eligible instances. The free-tier usage limits are listed below for your reference. For more information, see AWS Free Tier.

Setting up Hadoop Cluster on Amazon Cloud

I wanted to get familiar with the big data world, and decided to test Hadoop on Amazon Cloud. It was a really interesting and informative experience. The aim of this blog is to share my experience, thoughts and observations related to both practical and non-practical use of Apache Hadoop.

Overview

A typical Hadoop multi-node cluster